A Framework for AI and Community Strategy

Executives are asking every team to experiment with AI. Here's a framework to help senior community leaders and sponsoring executives see the full range of opportunities and decide where to invest.

At last month’s Structure.Community Winter Summit, I watched thirty senior community leaders map their current AI experiments onto a wall. The clustering was striking. Most of the activity concentrated in the same few areas: moderation, search, content drafting, basic analytics. These are useful applications, and several teams are getting real results from them. But they represent a narrow slice of what’s possible.

When we shifted the exercise to “what would you build if constraints were removed,” the ideas expanded dramatically, into business intelligence, personalized member journeys, advocacy discovery, cross-platform listening, and more. What separates the two lists is the absence of a strategic frame. Without one, teams default to whatever their platform vendor ships or whatever their most technically curious team member experiments with on a Friday afternoon.

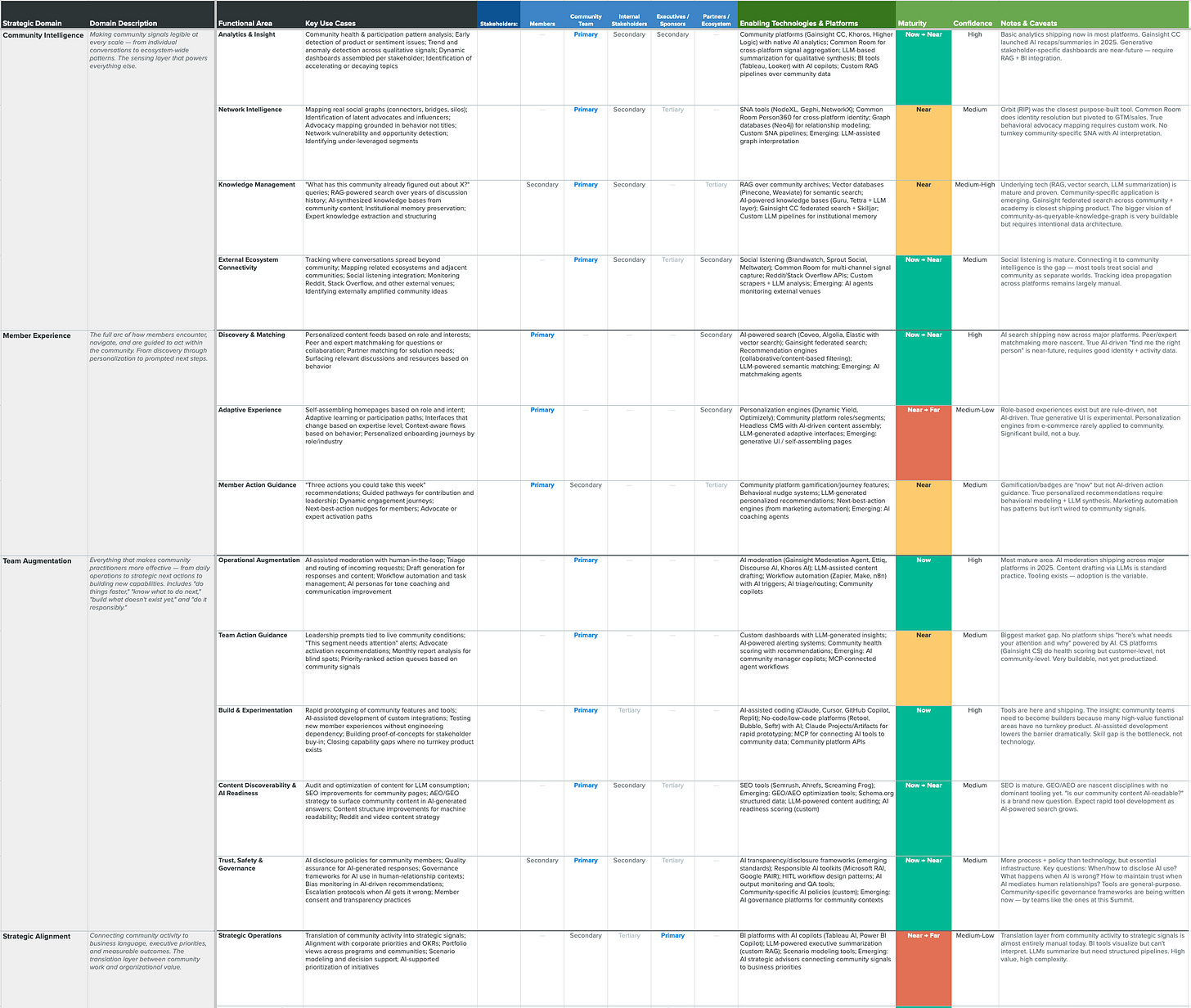

I built the Community + AI Framework to address that gap. It organizes the full range of AI opportunities in community into six strategic domains, each with specific functional areas underneath. The goal is to help community leaders see the whole board, identify where they are today, and make deliberate choices about where to invest next.

The framework and a companion pilot design worksheet are available to download at the end of this piece. What follows is a walk-through of each domain and why it matters.

Community Intelligence

Making community signals legible, from individual conversations to ecosystem-wide patterns.

This is the foundation. Every other domain depends on it.

The functional areas here fall into three groups. Health monitoring and trend detection track how the community is doing and what is changing. Network intelligence maps who’s actually connected to whom, and where the bridges and silos are. Knowledge management is the ability to query years of community discussion history as a structured knowledge asset.
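To make “bridges and silos” concrete, here is a minimal sketch, assuming you can export interactions (replies, mentions) as pairs of member names. The function names and the sample data format are hypothetical, not taken from any platform’s API. It treats a silo as a group of members connected only to each other, and a bridge as a member whose removal would split a group apart.

```python
from collections import defaultdict


def build_graph(interactions):
    """Build an undirected graph from (member_a, member_b) interaction pairs."""
    graph = defaultdict(set)
    for a, b in interactions:
        if a == b:
            continue  # ignore self-replies
        graph[a].add(b)
        graph[b].add(a)
    return graph


def find_silos(graph, skip=None):
    """Return connected groups of members, optionally ignoring one member."""
    seen, silos = set(), []
    for start in graph:
        if start in seen or start == skip:
            continue
        component, stack = set(), [start]
        while stack:
            node = stack.pop()
            if node in component or node == skip:
                continue
            component.add(node)
            stack.extend(graph[node] - component)
        seen |= component
        silos.append(component)
    return silos


def find_bridges(graph):
    """Return members whose removal would split the network into more groups."""
    baseline = len(find_silos(graph))
    bridges = [
        member
        for member in graph
        if len(find_silos(graph, skip=member)) > baseline
    ]
    return sorted(bridges)
```

This brute-force approach is fine for a few thousand members. A larger network would call for a dedicated graph library, and real interaction data would need weighting by recency and frequency before the results mean much.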

Most teams have some version of basic analytics in place. Platform dashboards show activity metrics, and several vendors shipped AI-powered summaries and recaps in 2025. But the more ambitious applications, like behavioral advocacy mapping or treating community as a queryable knowledge graph, require intentional data architecture and often custom work. The technology is mature enough. The application to community is still emerging.

Member Experience

How members discover, connect, and are guided toward their next meaningful action.

This domain covers how people find content and connections, how the experience adapts to who they are, and how they’re guided toward meaningful next steps.

AI-powered search and content recommendations are shipping now across major platforms, and the improvements are real. One of the more interesting functional areas here is peer and expert matchmaking, using AI to connect members with the right people rather than just the right content. Several teams at the summit described this as a persistent member request that they haven’t been able to address with existing tools.

Member action guidance is another area worth attention. Gamification and badges have been around for years, but AI-driven recommendations (“here are three things you could do this week based on your activity and interests”) require behavioral modeling that most community platforms don’t yet support. The pattern exists in marketing automation. It hasn’t been wired to community signals yet.

Team Augmentation

Helping community teams work faster, know what’s next, build what’s missing, and do it responsibly.

This is where most current AI experimentation lives, and for good reason. AI moderation, content drafting, triage and routing, and workflow automation are the most mature applications in the space. The tooling exists. Adoption is the variable.

But team augmentation extends well beyond operational efficiency. One of the most significant functional areas here is what I’m calling build and experimentation: community teams using AI-assisted development to prototype features, build custom integrations, and close capability gaps where no turnkey product exists. Many of the highest-value opportunities in this framework have no off-the-shelf solution. Community teams that develop the ability to build, even at a basic level, will move faster than those waiting for vendors to catch up.

Content discoverability and AI readiness is becoming urgent. AI search engines are increasingly citing user-generated and social content as authoritative sources. Reddit has been a top-cited domain in AI-generated answers for over a year. And LinkedIn recently surged from around #11 to #5 in ChatGPT’s domain rankings, with posts, articles, and newsletters now accounting for roughly 35% of all LinkedIn citations in AI search responses. The implication for community teams: the content your members create, and the way it’s structured for machine readability, directly shapes whether your community shows up when AI answers questions about your industry, your products, or your competitors. Most community content was never built with this in mind. The teams that audit and optimize for it now will have a significant head start.

Trust, safety, and governance is the final functional area in this domain, and it’s more about process than technology. AI disclosure policies, quality assurance for AI-generated responses, and governance frameworks for AI use in human-relationship contexts are all essential infrastructure that most teams are still writing.

Strategic Alignment

Connecting community activity to business language, executive priorities, and measurable outcomes.

This is where community work gets translated into organizational value.

The functional areas here include translating community activity into strategic signals that align with corporate priorities, and ROI and value translation, the ongoing work of linking community impact to pipeline, retention, and financial metrics. Basic community ROI reporting (deflection, engagement) is available now. The harder work of pipeline and retention attribution requires CRM integration and organizational buy-in that most teams are still pursuing.

The near-future unlock is AI-generated impact narratives. LLMs are well-suited to synthesizing structured community data into stakeholder-ready summaries. But the prerequisite is structured data, which brings us back to the integration challenge that surfaced throughout the summit. The teams that have invested in connecting their data to business systems are the ones whose value story practically tells itself.

One gap the summit exposed in this domain: LLM traffic. Multiple teams are seeing enormous request volumes from AI crawlers consuming their community content. AI systems are treating community-generated knowledge as a trusted source of human-validated information, and that trust was built over years of investment. But no one in the room had a standard way to measure, value, or report it. Content discoverability (in Team Augmentation) addresses the “make it findable” side. What’s missing is the value translation: how do you account for the fact that your community content is training and informing AI systems at scale, and what is that worth? This is an open question the industry hasn’t answered yet, and it belongs squarely in the strategic alignment conversation with leadership.
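Valuing that traffic is the open question, but a first-pass measurement is within reach. As a rough sketch, assuming you have web server access logs that record the User-Agent header, the snippet below counts requests from a short list of AI crawlers. The token list is illustrative and incomplete, the function names are hypothetical, and user agents can be spoofed, so treat the output as an estimate rather than an audit.

```python
from collections import Counter

# Assumed substrings that identify AI crawlers in the User-Agent header.
# Illustrative, not exhaustive; verify against each vendor's documentation.
AI_CRAWLER_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot", "CCBot")


def count_ai_crawler_hits(log_lines):
    """Count requests per AI crawler token across access-log lines."""
    counts = Counter()
    for line in log_lines:
        lowered = line.lower()
        for token in AI_CRAWLER_TOKENS:
            if token.lower() in lowered:
                counts[token] += 1
                break  # attribute each request to at most one crawler
    return counts


def ai_crawler_share(log_lines):
    """Return the fraction of all requests that came from listed AI crawlers."""
    lines = list(log_lines)
    if not lines:
        return 0.0
    return sum(count_ai_crawler_hits(lines).values()) / len(lines)
```

Tracked monthly, even a crude count like this gives leadership a trend line to react to while the harder valuation question remains unanswered.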

Innovation and Foresight

Beyond ideation toward shared sensemaking, futures work, and long-horizon exploration.

This is the least explored domain in the framework, and potentially the most valuable for organizations thinking beyond the next quarter.

Innovation and foresight moves past traditional ideation (submit an idea, vote on it) into shared sensemaking and long-horizon exploration. Think community-based futures workshops, high-trust collaboration spaces where customers and partners work alongside product teams, and AI-assisted trend synthesis grounded in real ecosystem signals rather than analyst reports.

I’ve written about the failure patterns of crowdsourced innovation elsewhere in this series. The short version: platforms that treat communities as idea mines consistently fail. The organizations that succeed are the ones that build collaborative infrastructure and relationship-rich environments where problems are shaped, judgment develops, and the community itself becomes a strategic sensing mechanism. AI makes this more powerful by enabling synthesis at a scale previously impossible, but the foundation remains human trust and relationship infrastructure.

Enterprise Integration

The connective tissue that makes community data actionable, where decisions actually get made.

Enterprise integration was the single biggest frustration at the summit. Almost every ambitious idea required connecting community data to systems that community teams don’t control. The barrier is organizational, not technical.

One development worth watching: the Model Context Protocol (MCP), an open standard for connecting AI systems to data sources, was adopted by major AI providers and donated to the Linux Foundation in late 2025. It’s becoming an industry standard, and community-specific MCP servers are an emerging opportunity. The ability to pull community data into AI-powered analytical and operational workflows, without building custom integrations from scratch, could meaningfully change the integration picture over the next year.

From framework to action

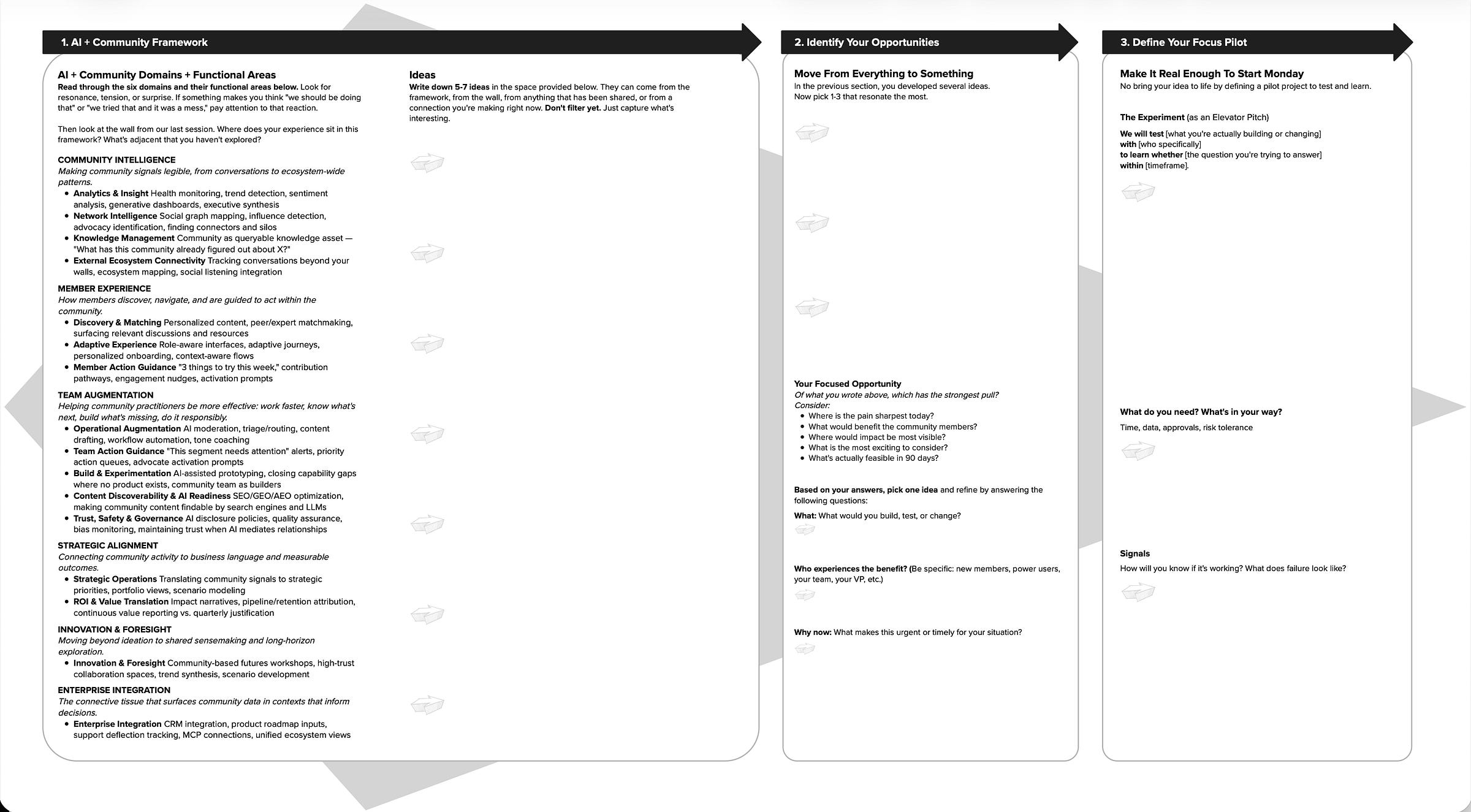

A framework is only useful if it leads to decisions. At the summit, I introduced a companion worksheet designed to move community leaders from orientation through opportunity identification to a concrete pilot plan.

The worksheet works in three stages. First, you read through the six domains and their functional areas and notice where you feel resonance, tension, or surprise. If something makes you think “we should be doing that” or “we tried that and it was a mess,” pay attention to that reaction. Second, you identify five to seven ideas and then filter down to the one or two with the strongest pull, considering where the pain is sharpest, what would benefit members most, where impact would be most visible, and what’s feasible in 90 days. Third, you design a pilot: an elevator pitch for what you’re testing, who benefits, what you need, and how you’ll know if it’s working.

The worksheet includes a specific format for the pilot pitch: “We will test [what you’re building or changing] with [who specifically] to learn whether [the question you’re trying to answer] within [timeframe].” That specificity matters. It’s the difference between “we should do something with AI” and a plan you can start on Monday.

Both the framework and the worksheet are available for download here (short registration). The best way to say “Thank You” for access is to like and share this post!

Thanks to the members of the Structure.Community network for giving feedback on early versions of the worksheet and framework, especially Chris Catania from ESRI.

The Community + AI Framework (V3) and the AI Pilot Design Worksheet are free to use and share. If you’d like to discuss how they apply to your specific program, reach out at bill.johnston@structure3c.com.